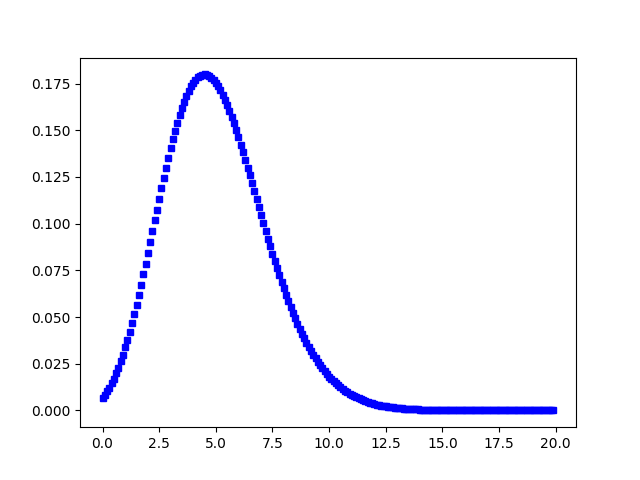

    from __future__ import division
    from matplotlib import pyplot as plt
    import numpy as np
    from scipy import stats
    import seaborn as sns
    from import GenericLikelihoodModel
    np.random.

Therefore, even the chance of seeing a single sample above 21 among 10000 draws is less than 5.5e-12.

    plot(new_x, best_pdf_scaled, label=best_name)
    plt.

The probability mass function of the zero-inflated Poisson distribution is shown below, next to a standard Poisson distribution, for comparison.
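To make the comparison between the two probability mass functions concrete, here is a minimal sketch that uses only the Python standard library. The function names and the parameters `lam` (Poisson rate) and `pi` (probability of a structural zero) are hypothetical and chosen for illustration; the values used are not taken from the text. It assumes the usual zero-inflated form, in which an outcome is a forced zero with probability `pi` and otherwise comes from an ordinary Poisson distribution.

```python
import math


def poisson_pmf(k, lam):
    """P(X = k) for a Poisson distribution with rate lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def zip_pmf(k, lam, pi):
    """P(X = k) for a zero-inflated Poisson distribution.

    With probability pi the outcome is a structural zero; otherwise it is
    drawn from Poisson(lam).
    """
    base = (1.0 - pi) * poisson_pmf(k, lam)
    return pi + base if k == 0 else base


if __name__ == "__main__":
    lam, pi = 2.0, 0.3  # illustrative values only
    for k in range(6):
        print(k, round(poisson_pmf(k, lam), 4), round(zip_pmf(k, lam, pi), 4))
```

The zero-inflated version puts extra mass at `k = 0` and scales every other probability by `1 - pi`, so the two functions coincide when `pi` is 0.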

# SciPy stats Poisson PDF #

`scipy.stats.poisson` is a Poisson discrete random variable. Discrete random variables are defined from a standard form and may require some shape parameters to complete their specification.

    import matplotlib.pyplot as plt
    import numpy as np
    import pandas as pd
    import scipy.stats as st
    from tqdm import tqdm


    def fit_scipy_distributions(array, bins, plot_hist=True, plot_best_fit=True, plot_all_fits=False):
        """
        Fits a range of Scipy's distributions (see scipy.stats) against an array-like input.
        Returns the sum of squared error (SSE) between the fits and the actual distribution.
        Can also choose to plot the array's histogram along with the computed fits.
        Modify the "CHANGE IF REQUIRED" comments!

        Input: array - array-like input
               bins - number of bins wanted for the histogram
               plot_hist - boolean, whether you want to show the histogram
               plot_best_fit - boolean, whether you want to overlay the plot of the best fitting distribution
               plot_all_fits - boolean, whether you want to overlay ALL the fits (can be messy!)

        Returns: results - dataframe with SSE and distribution name, in ascending order (i.e. best fit first)
                 best_name - string with the name of the best fitting distribution
                 best_params - list with the parameters of the best fitting distribution.
        """
        if plot_best_fit or plot_all_fits:
            assert plot_hist, "plot_hist must be True if setting plot_best_fit or plot_all_fits to True"

        data = np.array(array)

        # Returns un-normalised (i.e. counts) histogram
        y, x = np.histogram(data, bins=bins)

        # Some details about the histogram
        bin_width = x[1] - x[0]
        N = len(array)
        x_mid = (x + np.roll(x, -1))[:-1] / 2.0  # go from bin edges to bin middles

        # selection of available distributions
        # CHANGE THIS IF REQUIRED (the three below are an assumed, illustrative choice)
        DISTRIBUTIONS = [st.norm, st.laplace, st.cauchy]

        if plot_hist:
            fig, ax = plt.subplots()
            ax.hist(data, bins=bins, color='w')

        # loop through the distributions and store the sum of squared errors
        # so we know which one eventually will have the best fit
        sses = []
        for dist in tqdm(DISTRIBUTIONS):
            name = dist.name

            # fit() returns any shape parameters followed by loc and scale
            params = dist.fit(data)
            arg = params[:-2]
            loc = params[-2]
            scale = params[-1]

            pdf = dist.pdf(x_mid, *arg, loc=loc, scale=scale)
            pdf_scaled = pdf * bin_width * N  # to go from pdf back to counts need to un-normalise the pdf

            sse = np.sum((y - pdf_scaled) ** 2)
            sses.append([sse, name])

            # Not strictly necessary to plot, but pretty patterns
            if plot_all_fits:
                ax.plot(x_mid, pdf_scaled, label=name)

        if plot_all_fits:
            plt.legend(loc=1)

        if plot_hist:
            # CHANGE THIS IF REQUIRED
            ax.set_ylabel('y label')

        # Things to return - df of SSE and distribution name, the best distribution and its parameters
        # ('distribution' is an assumed column label; 'SSE' is the sort key)
        results = pd.DataFrame(sses, columns=['SSE', 'distribution']).sort_values(by='SSE')
        best_name = results.iloc[0]['distribution']
        best_dist = getattr(st, best_name)
        best_params = best_dist.fit(data)

        if plot_best_fit:
            new_x = np.linspace(x_mid[0] - (bin_width * 2), x_mid[-1] + (bin_width * 2), 1000)
            best_pdf = best_dist.pdf(new_x, *best_params[:-2], loc=best_params[-2], scale=best_params[-1])
            best_pdf_scaled = best_pdf * bin_width * N
            ax.plot(new_x, best_pdf_scaled, label=best_name)

        return results, best_name, best_params
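The SSE comparison described in the docstring can be illustrated on a much smaller scale. The sketch below is not the function above: it swaps `scipy.stats` for the Python standard library and considers only two candidates, a normal and a Laplace distribution. All helper names (`histogram`, `fit_normal`, `fit_laplace`, `rank_by_sse`) are hypothetical. It shows the one step that is easy to get wrong: a fitted density has to be multiplied by the bin width and the sample size before it can be compared with histogram counts.

```python
import math
import random
import statistics


def histogram(data, bins):
    """Return (counts, bin_mids, bin_width) for equal-width bins."""
    lo, hi = min(data), max(data)
    width = (hi - lo) / bins
    counts = [0] * bins
    for value in data:
        # Clamp so that the maximum value falls into the last bin.
        index = min(int((value - lo) / width), bins - 1)
        counts[index] += 1
    mids = [lo + (i + 0.5) * width for i in range(bins)]
    return counts, mids, width


def fit_normal(data):
    """Fit a normal distribution and return its pdf as a callable."""
    return statistics.NormalDist.from_samples(data).pdf


def fit_laplace(data):
    """Maximum-likelihood Laplace fit: median and mean absolute deviation."""
    loc = statistics.median(data)
    scale = sum(abs(v - loc) for v in data) / len(data)
    return lambda x: math.exp(-abs(x - loc) / scale) / (2.0 * scale)


def rank_by_sse(data, bins, candidates):
    """Return [(sse, name), ...] sorted so the best fit comes first."""
    counts, mids, width = histogram(data, bins)
    n = len(data)
    ranking = []
    for name, fit in candidates.items():
        pdf = fit(data)
        # Un-normalise the pdf: density * bin width * N gives expected counts.
        expected = [pdf(x) * width * n for x in mids]
        sse = sum((c - e) ** 2 for c, e in zip(counts, expected))
        ranking.append((sse, name))
    return sorted(ranking)


rng = random.Random(0)
sample = [rng.gauss(5.0, 2.0) for _ in range(5000)]  # synthetic test data
ranking = rank_by_sse(sample, 30, {"norm": fit_normal, "laplace": fit_laplace})
best_sse, best_name = ranking[0]
print(best_name, ranking)
```

Because the synthetic sample is drawn from a normal distribution, the normal candidate should come out with the smaller SSE. Note that SSE against a histogram depends on the number of bins, so the ranking is a rough guide rather than a formal goodness-of-fit test.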